1. Introduction

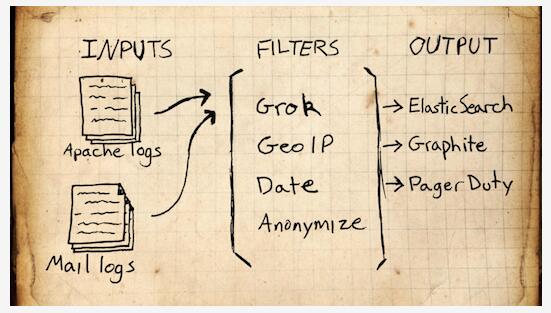

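The Elastic Stack is not a single piece of software but a collection of open source tools (Elasticsearch, Logstash and Kibana) that together serve as an open source log management solution. It can take in logs from any source in any format, search and analyze them, and display the results in real time. Add-ons such as Shield (security), Watcher (alerting) and Marvel (monitoring) extend what you can do with it.

Elasticsearch: search; a distributed full-text search engine

Logstash: log collection, processing and forwarding

Kibana: web interface for filtering and visualizing logs

Filebeat: watches log files and ships them onward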

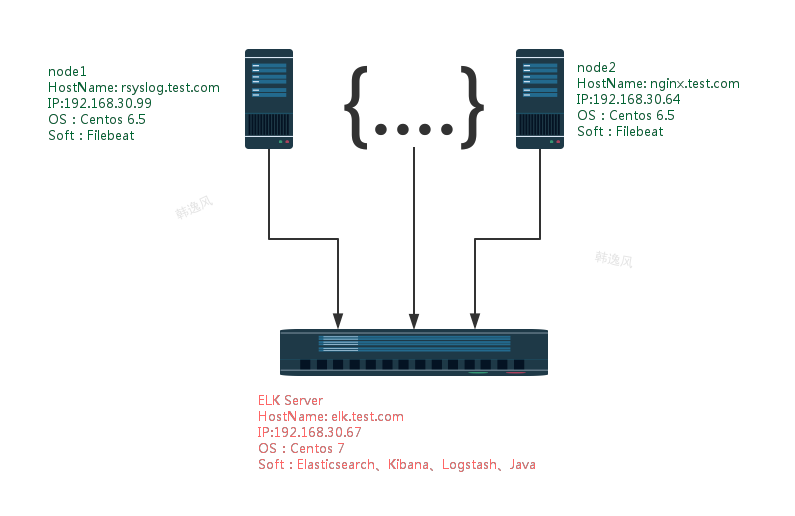

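2. Test environment layout

Environment: IP addresses and host names follow the plan above. All systems have been updated, the clocks on all hosts are in sync, and the firewall is disabled in the test environment. What follows is the deployment I carried out while learning ELK.

Purpose: use the elk host to collect and monitor the system logs of the main servers as well as the logs of production application services.

3. Installing Elasticsearch, Logstash and Kibana (run on elk.test.com)

3.1. Basic environment check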

[root@elk ~]# hostname
elk.test.com
[root@elk ~]# cat /etc/hosts
127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
192.168.30.67 elk.test.com
192.168.30.99 rsyslog.test.com
192.168.30.64 nginx.test.com
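Every later step relies on these names resolving, so the check can be scripted. The following is a minimal sketch and not part of the original setup: the function name check_hosts is hypothetical, and the host names are the ones from the listing above.

```shell
#!/bin/sh
# Check that every expected host name has an entry in a hosts file.
# Usage: check_hosts FILE NAME...
check_hosts() {
  hosts_file=$1
  shift
  missing=0
  for name in "$@"; do
    # Strip comments, then look for the name in any field after the address.
    if ! sed 's/#.*//' "$hosts_file" | awk -v n="$name" '
        { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit (found ? 0 : 1) }'; then
      echo "missing: $name"
      missing=1
    fi
  done
  return "$missing"
}

# Example call (left commented out so that sourcing this file has no effect):
# check_hosts /etc/hosts elk.test.com rsyslog.test.com nginx.test.com
```

The function prints one line per missing name and returns non-zero if any name is absent, so it can gate the rest of an install script.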

3.2. Packages

[root@elk ~]# cd elk/
[root@elk elk]# wget -c https://download.elastic.co/elasticsearch/release/org/elasticsearch/distribution/rpm/elasticsearch/2.3.3/elasticsearch-2.3.3.rpm
[root@elk elk]# wget -c https://download.elastic.co/logstash/logstash/packages/centos/logstash-2.3.2-1.noarch.rpm
[root@elk elk]# wget https://download.elastic.co/kibana/kibana/kibana-4.5.1-1.x86_64.rpm
[root@elk elk]# wget -c https://download.elastic.co/beats/filebeat/filebeat-1.2.3-x86_64.rpm

3.3. Check

[root@elk elk]# ls
elasticsearch-2.3.3.rpm filebeat-1.2.3-x86_64.rpm kibana-4.5.1-1.x86_64.rpm logstash-2.3.2-1.noarch.rpm

The server only needs Elasticsearch, Logstash and Kibana; the clients only need Filebeat.
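A download that failed can leave a missing or empty file behind, so the `ls` check can be made a little stricter. This is a small sketch under that assumption; check_packages is a hypothetical helper name, and the file names are those listed above.

```shell
#!/bin/sh
# Report which of the expected package files are present and non-empty.
# Usage: check_packages DIR FILE...
check_packages() {
  dir=$1
  shift
  status=0
  for pkg in "$@"; do
    if [ -s "$dir/$pkg" ]; then
      echo "ok: $pkg"
    else
      echo "missing or empty: $pkg"
      status=1
    fi
  done
  return "$status"
}

# Example call (commented out; paths are the ones used in this article):
# check_packages /root/elk elasticsearch-2.3.3.rpm logstash-2.3.2-1.noarch.rpm \
#   kibana-4.5.1-1.x86_64.rpm filebeat-1.2.3-x86_64.rpm
# For a stronger check, `rpm -K FILE` verifies the digests inside each package.
```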

3.4. Install Elasticsearch. Install a JDK first, because the ELK server needs a Java runtime. The clients run Filebeat, which does not depend on Java, so they do not need one.

[root@elk elk]# yum install java-1.8.0-openjdk -y

Install Elasticsearch:

[root@elk elk]# yum localinstall elasticsearch-2.3.3.rpm -y
.....
Installing : elasticsearch-2.3.3-1.noarch 1/1
### NOT starting on installation, please execute the following statements to configure elasticsearch service to start automatically using systemd
sudo systemctl daemon-reload
sudo systemctl enable elasticsearch.service
### You can start elasticsearch service by executing
sudo systemctl start elasticsearch.service
Verifying : elasticsearch-2.3.3-1.noarch 1/1
Installed:
elasticsearch.noarch 0:2.3.3-1

Reload systemd so that it picks up new or changed units, then enable the service at boot and start it:

[root@elk elk]# systemctl daemon-reload

[root@elk elk]# systemctl enable elasticsearch

Created symlink from /etc/systemd/system/multi-user.target.wants/elasticsearch.service to /usr/lib/systemd/system/elasticsearch.service.

[root@elk elk]# systemctl start elasticsearch

[root@elk elk]# systemctl status elasticsearch

● elasticsearch.service - Elasticsearch

Loaded: loaded (/usr/lib/systemd/system/elasticsearch.service; enabled; vendor preset: disabled)

Active: active (running) since Fri 2016-05-20 15:38:35 CST; 12s ago

Docs: http://www.elastic.co

Process: 10428 ExecStartPre=/usr/share/elasticsearch/bin/elasticsearch-systemd-pre-exec (code=exited, status=0/SUCCESS)

Main PID: 10430 (java)

CGroup: /system.slice/elasticsearch.service

└─10430 /bin/java -Xms256m -Xmx1g -Djava.awt.headless=true -XX:+UseParNewGC -XX:+UseConcMarkSweepGC -XX:CMSInitiatingOccupancy...

May 20 15:38:38 elk.test.com elasticsearch[10430]: [2016-05-20 15:38:38,279][INFO ][env ] [James Howlett] heap...[true]

May 20 15:38:38 elk.test.com elasticsearch[10430]: [2016-05-20 15:38:38,279][WARN ][env ] [James Howlett] max ...65536]

May 20 15:38:41 elk.test.com elasticsearch[10430]: [2016-05-20 15:38:41,726][INFO ][node ] [James Howlett] initialized

May 20 15:38:41 elk.test.com elasticsearch[10430]: [2016-05-20 15:38:41,726][INFO ][node ] [James Howlett] starting ...

May 20 15:38:41 elk.test.com elasticsearch[10430]: [2016-05-20 15:38:41,915][INFO ][transport ] [James Howlett] publ...:9300}

May 20 15:38:41 elk.test.com elasticsearch[10430]: [2016-05-20 15:38:41,920][INFO ][discovery ] [James Howlett] elas...xx35hw

May 20 15:38:45 elk.test.com elasticsearch[10430]: [2016-05-20 15:38:45,099][INFO ][cluster.service ] [James Howlett] new_...eived)

May 20 15:38:45 elk.test.com elasticsearch[10430]: [2016-05-20 15:38:45,164][INFO ][gateway ] [James Howlett] reco..._state

May 20 15:38:45 elk.test.com elasticsearch[10430]: [2016-05-20 15:38:45,185][INFO ][http ] [James Howlett] publ...:9200}

May 20 15:38:45 elk.test.com elasticsearch[10430]: [2016-05-20 15:38:45,185][INFO ][node ] [James Howlett] started

Hint: Some lines were ellipsized, use -l to show in full.

Check the service:

[root@elk elk]# rpm -qc elasticsearch
/etc/elasticsearch/elasticsearch.yml
/etc/elasticsearch/logging.yml
/etc/init.d/elasticsearch
/etc/sysconfig/elasticsearch
/usr/lib/sysctl.d/elasticsearch.conf
/usr/lib/systemd/system/elasticsearch.service
/usr/lib/tmpfiles.d/elasticsearch.conf
[root@elk elk]# netstat -nltp | grep java
tcp6 0 0 127.0.0.1:9200 :::* LISTEN 10430/java
tcp6 0 0 ::1:9200 :::* LISTEN 10430/java
tcp6 0 0 127.0.0.1:9300 :::* LISTEN 10430/java
tcp6 0 0 ::1:9300 :::* LISTEN 10430/java
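In the listing above, ports 9200 and 9300 are bound only to 127.0.0.1 and ::1. Other hosts therefore cannot reach Elasticsearch until network.host is changed (section 3.7), whatever the firewall allows. The sketch below makes that check explicit; the helper names listen_addrs and loopback_only are hypothetical, and the input is assumed to be numeric `netstat -nlt` style output.

```shell
#!/bin/sh
# Print the local addresses a TCP port is listening on, one per line.
# Reads `netstat -nlt` style output on stdin. Usage: listen_addrs PORT
listen_addrs() {
  awk -v port="$1" '
    $6 == "LISTEN" {
      n = split($4, parts, ":")
      # The port is the last colon-separated piece; the rest is the address.
      if (parts[n] == port) print substr($4, 1, length($4) - length(port) - 1)
    }'
}

# Return 0 if the port is bound to loopback addresses only,
# 1 if it is also bound elsewhere, 2 if it is not listening at all.
loopback_only() {
  addrs=$(listen_addrs "$1")
  [ -n "$addrs" ] || return 2
  for a in $addrs; do
    case $a in
      127.*|::1) ;;      # loopback, keep looking
      *) return 1 ;;
    esac
  done
  return 0
}

# Example call (commented out):
# netstat -nlt | loopback_only 9200 && echo "9200 is reachable from localhost only"
```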

Update the firewall to open ports 9200 and 9300:

[root@elk elk]# firewall-cmd --permanent --add-port={9200/tcp,9300/tcp}

success

[root@elk elk]# firewall-cmd --reload

success

[root@elk elk]# firewall-cmd --list-all

public (default, active)

interfaces: eno16777984 eno33557248

sources:

services: dhcpv6-client ssh

ports: 9200/tcp 9300/tcp

masquerade: no

forward-ports:

icmp-blocks:

rich rules:
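The same confirmation can be done without reading the output by eye. This is only a sketch: ports_open is a hypothetical helper that parses the `ports:` line of `firewall-cmd --list-all` output supplied on stdin.

```shell
#!/bin/sh
# Succeed if every given port/protocol pair appears in the "ports:" line
# of `firewall-cmd --list-all` output read from stdin.
# Usage: firewall-cmd --list-all | ports_open 9200/tcp 9300/tcp
ports_open() {
  opened=$(awk '$1 == "ports:" { for (i = 2; i <= NF; i++) print $i }')
  for want in "$@"; do
    if ! printf '%s\n' "$opened" | grep -qxF "$want"; then
      echo "closed: $want"
      return 1
    fi
  done
  return 0
}
```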

3.5 Install Kibana

[root@elk elk]# yum localinstall kibana-4.5.1-1.x86_64.rpm -y

[root@elk elk]# systemctl enable kibana

Created symlink from /etc/systemd/system/multi-user.target.wants/kibana.service to /usr/lib/systemd/system/kibana.service.

[root@elk elk]# systemctl start kibana

[root@elk elk]# systemctl status kibana

● kibana.service - no description given

Loaded: loaded (/usr/lib/systemd/system/kibana.service; enabled; vendor preset: disabled)

Active: active (running) since Fri 2016-05-20 15:49:02 CST; 20s ago

Main PID: 11260 (node)

CGroup: /system.slice/kibana.service

└─11260 /opt/kibana/bin/../node/bin/node /opt/kibana/bin/../src/cli

May 20 15:49:05 elk.test.com kibana[11260]: {"type":"log","@timestamp":"2016-05-20T07:49:05+00:00","tags":["status","plugin:elasticsearch...

May 20 15:49:05 elk.test.com kibana[11260]: {"type":"log","@timestamp":"2016-05-20T07:49:05+00:00","tags":["status","plugin:kbn_vi...lized"}

May 20 15:49:05 elk.test.com kibana[11260]: {"type":"log","@timestamp":"2016-05-20T07:49:05+00:00","tags":["status","plugin:markdo...lized"}

May 20 15:49:05 elk.test.com kibana[11260]: {"type":"log","@timestamp":"2016-05-20T07:49:05+00:00","tags":["status","plugin:metric...lized"}

May 20 15:49:05 elk.test.com kibana[11260]: {"type":"log","@timestamp":"2016-05-20T07:49:05+00:00","tags":["status","plugin:spyMod...lized"}

May 20 15:49:05 elk.test.com kibana[11260]: {"type":"log","@timestamp":"2016-05-20T07:49:05+00:00","tags":["status","plugin:status...lized"}

May 20 15:49:05 elk.test.com kibana[11260]: {"type":"log","@timestamp":"2016-05-20T07:49:05+00:00","tags":["status","plugin:table_...lized"}

May 20 15:49:05 elk.test.com kibana[11260]: {"type":"log","@timestamp":"2016-05-20T07:49:05+00:00","tags":["listening","info"],"pi...:5601"}

May 20 15:49:10 elk.test.com kibana[11260]: {"type":"log","@timestamp":"2016-05-20T07:49:10+00:00","tags":["status","plugin:elasticsearch...

May 20 15:49:14 elk.test.com kibana[11260]: {"type":"log","@timestamp":"2016-05-20T07:49:14+00:00","tags":["status","plugin:elasti...found"}

Hint: Some lines were ellipsized, use -l to show in full.

检查kibana服务运行(Kibana默认 进程名:node ,端口5601)

[root@elk elk]# netstat -nltp Active Internet connections (only servers) Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name tcp 0 0 0.0.0.0:22 0.0.0.0:* LISTEN 909/sshd tcp 0 0 127.0.0.1:25 0.0.0.0:* LISTEN 1595/master tcp 0 0 0.0.0.0:5601 0.0.0.0:* LISTEN 11260/node

修改防火墙,对外开放tcp/5601

[root@elk elk]# firewall-cmd --permanent --add-port=5601/tcp Success [root@elk elk]# firewall-cmd --reload success [root@elk elk]# firewall-cmd --list-all public (default, active) interfaces: eno16777984 eno33557248 sources: services: dhcpv6-client ssh ports: 9200/tcp 9300/tcp 5601/tcp masquerade: no forward-ports: icmp-blocks: rich rules:

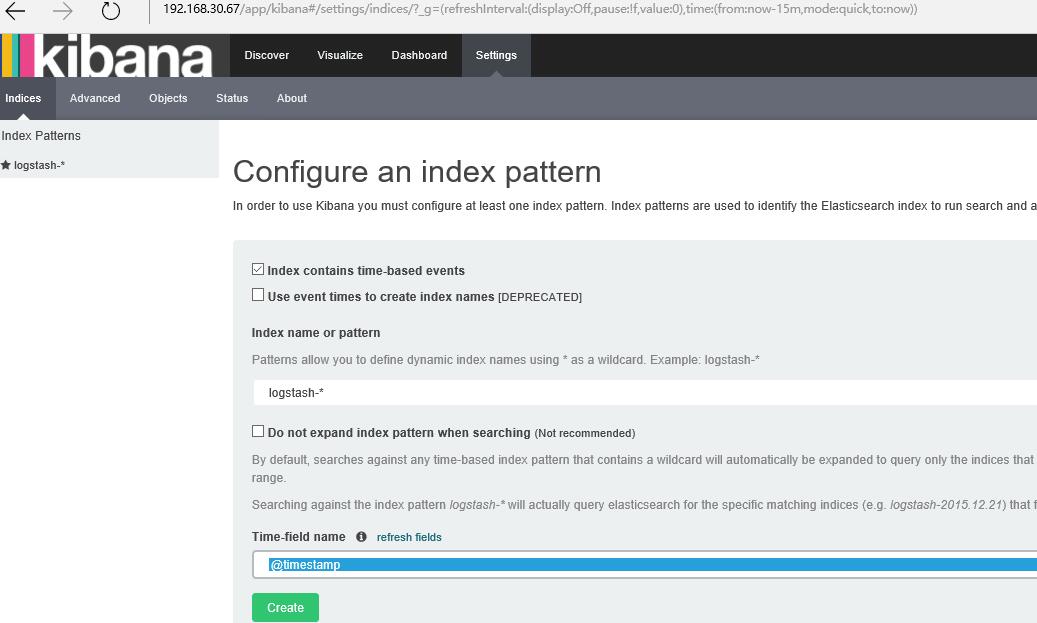

这时,我们可以打开浏览器,测试访问一下kibana服务器http://192.168.30.67:5601/,确认没有问题,如下图:

在这里,我们可以修改防火墙,将用户访问80端口连接转发到5601上,这样可以直接输入网址不用指定端口了,如下:

[root@elk elk]# firewall-cmd --permanent --add-forward-port=port=80:proto=tcp:toport=5601 [root@elk elk]# firewall-cmd --reload [root@elk elk]# firewall-cmd --list-all public (default, active) interfaces: eno16777984 eno33557248 sources: services: dhcpv6-client ssh ports: 9200/tcp 9300/tcp 5601/tcp masquerade: no forward-ports: port=80:proto=tcp:toport=5601:toaddr= icmp-blocks: rich rules:

3.6 Install Logstash and add its configuration file

[root@elk elk]# yum localinstall logstash-2.3.2-1.noarch.rpm -y

Generate a certificate:

[root@elk elk]# cd /etc/pki/tls/
[root@elk tls]# ls
cert.pem certs misc openssl.cnf private
[root@elk tls]# openssl req -subj '/CN=elk.test.com/' -x509 -days 3650 -batch -nodes -newkey rsa:2048 -keyout private/logstash-forwarder.key -out certs/logstash-forwarder.crt
Generating a 2048 bit RSA private key
...................................................................+++
......................................................+++
writing new private key to 'private/logstash-forwarder.key'
-----
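The clients later connect to elk.test.com:5000 and verify the server against this certificate, so the CN in the subject should match the host name they use. The sketch below shows one way to inspect the result with `openssl x509`; cert_info and cert_matches_host are hypothetical helper names, and the match is deliberately loose because the subject line is printed differently across OpenSSL versions.

```shell
#!/bin/sh
# Print the subject and expiry date of a PEM certificate.
cert_info() {
  openssl x509 -in "$1" -noout -subject -enddate
}

# Succeed if the certificate subject contains CN=<host>.
# Loose text match: tolerates both "/CN=host" and "CN = host" layouts.
cert_matches_host() {
  cert_info "$1" | grep -q "CN *= *$2"
}

# Example calls (commented out; the path is the one generated above):
# cert_info /etc/pki/tls/certs/logstash-forwarder.crt
# cert_matches_host /etc/pki/tls/certs/logstash-forwarder.crt elk.test.com
```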

Then create the Logstash configuration file, as follows:

[root@elk ~]# cat /etc/logstash/conf.d/01-logstash-initial.conf

input {
  beats {
    port => 5000
    type => "logs"
    ssl => true
    ssl_certificate => "/etc/pki/tls/certs/logstash-forwarder.crt"
    ssl_key => "/etc/pki/tls/private/logstash-forwarder.key"
  }
}
filter {
  if [type] == "syslog-beat" {
    grok {
      match => { "message" => "%{SYSLOGTIMESTAMP:syslog_timestamp} %{SYSLOGHOST:syslog_hostname} %{DATA:syslog_program}(?:\[%{POSINT:syslog_pid}\])?: %{GREEDYDATA:syslog_message}" }
      add_field => [ "received_at", "%{@timestamp}" ]
      add_field => [ "received_from", "%{host}" ]
    }
    geoip {
      source => "clientip"
    }
    syslog_pri {}
    date {
      match => [ "syslog_timestamp", "MMM d HH:mm:ss", "MMM dd HH:mm:ss" ]
    }
  }
}
output {
  elasticsearch { }
  stdout { codec => rubydebug }
}
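To get a feel for what the grok pattern in the filter extracts, here is a rough approximation written with `sed -E`. It is an illustration only: grok's real SYSLOGTIMESTAMP, SYSLOGHOST and DATA patterns are broader than these expressions, the function name parse_syslog_line is hypothetical, and the sample log lines are invented.

```shell
#!/bin/sh
# Approximate the grok match above with an extended regular expression.
# Prints: timestamp|host|program|pid|message  (pid is empty when absent).
# Lines that do not match produce no output, much like a grok parse failure.
parse_syslog_line() {
  printf '%s\n' "$1" | sed -E -n \
    's/^([A-Z][a-z]{2} +[0-9]{1,2} [0-9]{2}:[0-9]{2}:[0-9]{2}) ([^ ]+) ([^]:[ ]+)(\[([0-9]+)\])?: (.*)$/\1|\2|\3|\5|\6/p'
}

# Example calls (commented out):
# parse_syslog_line 'May 20 15:38:38 elk sshd[1234]: Accepted password for root'
# parse_syslog_line 'May  5 01:02:03 nginx kernel: eth0 up'
```

The five fields correspond to syslog_timestamp, syslog_hostname, syslog_program, syslog_pid and syslog_message in the Logstash configuration.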

Start Logstash and check the port. The configuration file above specifies port 5000:

[root@elk conf.d]# systemctl start logstash
[root@elk elk]# /sbin/chkconfig logstash on
[root@elk conf.d]# netstat -ntlp
Active Internet connections (only servers)
Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name
tcp 0 0 0.0.0.0:22 0.0.0.0:* LISTEN 909/sshd
tcp 0 0 127.0.0.1:25 0.0.0.0:* LISTEN 1595/master
tcp 0 0 0.0.0.0:5601 0.0.0.0:* LISTEN 11260/node
tcp 0 0 0.0.0.0:514 0.0.0.0:* LISTEN 618/rsyslogd
tcp6 0 0 :::5000 :::* LISTEN 12819/java
tcp6 0 0 :::3306 :::* LISTEN 1270/mysqld
tcp6 0 0 127.0.0.1:9200 :::* LISTEN 10430/java
tcp6 0 0 ::1:9200 :::* LISTEN 10430/java
tcp6 0 0 127.0.0.1:9300 :::* LISTEN 10430/java
tcp6 0 0 ::1:9300 :::* LISTEN 10430/java
tcp6 0 0 :::22 :::* LISTEN 909/sshd
tcp6 0 0 ::1:25 :::* LISTEN 1595/master
tcp6 0 0 :::514 :::* LISTEN 618/rsyslogd

Update the firewall to open port 5000:

[root@elk ~]# firewall-cmd --permanent --add-port=5000/tcp
success
[root@elk ~]# firewall-cmd --reload
success
[root@elk ~]# firewall-cmd --list-all
public (default, active)
interfaces: eno16777984 eno33557248
sources:
services: dhcpv6-client ssh
ports: 9200/tcp 9300/tcp 5000/tcp 5601/tcp
masquerade: no
forward-ports: port=80:proto=tcp:toport=5601:toaddr=
icmp-blocks:
rich rules:

3.7 Edit the Elasticsearch configuration

In the configuration directory, create a folder named es-01 (the name is arbitrary). logging.yml is the bundled file; elasticsearch.yml is a newly created file with the content shown below:

[root@elk ~]# cd /etc/elasticsearch/
[root@elk elasticsearch]# tree
.
├── es-01
│   ├── elasticsearch.yml
│   └── logging.yml
└── scripts

[root@elk elasticsearch]# cat es-01/elasticsearch.yml
---
http:
  port: 9200
network:
  host: elk.test.com
node:
  name: elk.test.com
path:
  data: /etc/elasticsearch/data/es-01

3.8 Restart the elasticsearch and logstash services.
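The original does not show the restart commands. A minimal sketch, assuming the systemd units installed earlier in this section and the host and port from the elasticsearch.yml above: the helper names run and restart_stack are hypothetical, and the DRY_RUN switch is an addition for reviewing the commands before running them.

```shell
#!/bin/sh
# Run a command, or only print it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Restart the two services whose configuration changed, then query the
# Elasticsearch HTTP port to confirm that it answers on the new address.
restart_stack() {
  for svc in elasticsearch logstash; do
    run systemctl restart "$svc" || return 1
  done
  run curl -s http://elk.test.com:9200/
}

# Example calls (commented out):
# DRY_RUN=1 restart_stack    # print the commands only
# restart_stack              # actually restart (needs root)
```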

3.9 Copy the Filebeat package and the certificate to the rsyslog and nginx clients

[root@elk elk]# scp filebeat-1.2.3-x86_64.rpm root@rsyslog.test.com:/root/elk
[root@elk elk]# scp filebeat-1.2.3-x86_64.rpm root@nginx.test.com:/root/elk
[root@elk elk]# scp /etc/pki/tls/certs/logstash-forwarder.crt rsyslog.test.com:/root/elk
[root@elk elk]# scp /etc/pki/tls/certs/logstash-forwarder.crt nginx.test.com:/root/elk

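4. Deploying Filebeat on the clients (run on the rsyslog and nginx clients)

Filebeat is a lightweight client that collects logs from files on a server and forwards them to the Logstash server for processing. It communicates with the Logstash instance over the secure Beats protocol; the underlying lumberjack protocol is designed for reliability and low latency. Filebeat uses the computing resources of the machine that hosts the source data, and the Beats input plugin keeps the resource demands on Logstash to a minimum.

4.1. (node1) Install Filebeat, copy the certificate, and create the log collection configuration files

[root@rsyslog elk]# yum localinstall filebeat-1.2.3-x86_64.rpm -y
# Copy the certificate to the designated directory on this host
[root@rsyslog elk]# cp logstash-forwarder.crt /etc/pki/tls/certs/.
[root@rsyslog elk]# cd /etc/filebeat/
[root@rsyslog filebeat]# tree
.
├── conf.d
│   ├── authlogs.yml
│   └── syslogs.yml
├── filebeat.template.json
└── filebeat.yml

1 directory, 4 files

Three files are modified. filebeat.yml defines the connection to the Logstash server; the two files under conf.d define which logs to watch. Their contents are shown below: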

filebeat.yml

[root@rsyslog filebeat]# cat filebeat.yml

filebeat:

spool_size: 1024

idle_timeout: 5s

registry_file: .filebeat

config_dir: /etc/filebeat/conf.d

output:

logstash:

hosts:

- elk.test.com:5000

tls:

certificate_authorities: ["/etc/pki/tls/certs/logstash-forwarder.crt"]

enabled: true

shipper: {}

logging: {}

runoptions: {}

authlogs.yml & syslogs.yml

[root@rsyslog filebeat]# cat conf.d/authlogs.yml

filebeat:
  prospectors:
  - paths:
    - /var/log/secure
    encoding: plain
    fields_under_root: false
    input_type: log
    ignore_older: 24h
    document_type: syslog-beat
    scan_frequency: 10s
    harvester_buffer_size: 16384
    tail_files: false
    force_close_files: false
    backoff: 1s
    max_backoff: 1s
    backoff_factor: 2
    partial_line_waiting: 5s
    max_bytes: 10485760

[root@rsyslog filebeat]# cat conf.d/syslogs.yml

filebeat:
  prospectors:
  - paths:
    - /var/log/messages
    encoding: plain
    fields_under_root: false
    input_type: log
    ignore_older: 24h
    document_type: syslog-beat
    scan_frequency: 10s
    harvester_buffer_size: 16384
    tail_files: false
    force_close_files: false
    backoff: 1s
    max_backoff: 1s
    backoff_factor: 2
    partial_line_waiting: 5s
    max_bytes: 10485760
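The two prospector files differ only in the log path, so they lend themselves to being generated. The following sketch writes a reduced prospector file: make_prospector is a hypothetical helper, and it emits only a subset of the options shown above, leaving the rest at Filebeat's defaults.

```shell
#!/bin/sh
# Print a Filebeat 1.x prospector definition for one log file.
# Usage: make_prospector LOG_PATH DOCUMENT_TYPE > /etc/filebeat/conf.d/NAME.yml
make_prospector() {
  cat <<EOF
filebeat:
  prospectors:
  - paths:
    - $1
    input_type: log
    document_type: $2
    ignore_older: 24h
    scan_frequency: 10s
EOF
}

# Example calls (commented out):
# make_prospector /var/log/secure syslog-beat > /etc/filebeat/conf.d/authlogs.yml
# make_prospector /var/log/messages syslog-beat > /etc/filebeat/conf.d/syslogs.yml
```

The document_type value is what the Logstash filter tests with `if [type] == "syslog-beat"`, so it has to match the condition used on the server.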

After making the changes, start the filebeat service:

[root@rsyslog filebeat]# service filebeat start
Starting filebeat: [ OK ]
[root@rsyslog filebeat]# chkconfig filebeat on
[root@rsyslog filebeat]# netstat -altp
Active Internet connections (servers and established)
Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name
tcp 0 0 localhost:25151 *:* LISTEN 6230/python2
tcp 0 0 *:ssh *:* LISTEN 5509/sshd
tcp 0 0 localhost:ipp *:* LISTEN 1053/cupsd
tcp 0 0 localhost:smtp *:* LISTEN 1188/master
tcp 0 0 rsyslog.test.com:51155 elk.test.com:commplex-main ESTABLISHED 7443/filebeat
tcp 0 52 rsyslog.test.com:ssh 192.168.30.65:10580 ESTABLISHED 7164/sshd
tcp 0 0 *:ssh *:* LISTEN 5509/sshd
tcp 0 0 localhost:ipp *:* LISTEN 1053/cupsd
tcp 0 0 localhost:smtp *:* LISTEN 1188/master

If the connection cannot be established or its state looks wrong, check the firewall on the client.
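In the listing above, commplex-main is the /etc/services name for port 5000, which is why the port number itself does not appear. With numeric output (`netstat -nt`) the check is easier to script. A small sketch, where connected_to_port is a hypothetical helper name:

```shell
#!/bin/sh
# Succeed if netstat output on stdin shows an ESTABLISHED TCP connection
# to the given remote port. Feed it `netstat -nt` so ports stay numeric.
connected_to_port() {
  awk -v port="$1" '
    $6 == "ESTABLISHED" {
      n = split($5, parts, ":")
      if (parts[n] == port) found = 1
    }
    END { exit (found ? 0 : 1) }'
}

# Example call (commented out):
# netstat -nt | connected_to_port 5000 || echo "no connection to Logstash yet"
```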

4.2. (node2) Install Filebeat, copy the certificate, and create the log collection configuration files

[root@nginx elk]# yum localinstall filebeat-1.2.3-x86_64.rpm -y
[root@nginx elk]# cp logstash-forwarder.crt /etc/pki/tls/certs/.
[root@nginx elk]# cd /etc/filebeat/
[root@nginx filebeat]# tree
.
├── conf.d
│   ├── nginx.yml
│   └── syslogs.yml
├── filebeat.template.json
└── filebeat.yml

1 directory, 4 files

Edit filebeat.yml so that it reads as follows:

[root@nginx filebeat]# cat filebeat.yml

filebeat:

spool_size: 1024

idle_timeout: 5s

registry_file: .filebeat

config_dir: /etc/filebeat/conf.d

output:

logstash:

hosts:

- elk.test.com:5000

tls:

certificate_authorities: ["/etc/pki/tls/certs/logstash-forwarder.crt"]

enabled: true

shipper: {}

logging: {}

runoptions: {}

syslogs.yml & nginx.yml

[root@nginx filebeat]# cat conf.d/syslogs.yml

filebeat:
  prospectors:
  - paths:
    - /var/log/messages
    encoding: plain
    fields_under_root: false
    input_type: log
    ignore_older: 24h
    document_type: syslog-beat
    scan_frequency: 10s
    harvester_buffer_size: 16384
    tail_files: false
    force_close_files: false
    backoff: 1s
    max_backoff: 1s
    backoff_factor: 2
    partial_line_waiting: 5s
    max_bytes: 10485760

[root@nginx filebeat]# cat conf.d/nginx.yml

filebeat:
  prospectors:
  - paths:
    - /var/log/nginx/access.log
    encoding: plain
    fields_under_root: false
    input_type: log
    ignore_older: 24h
    document_type: syslog-beat
    scan_frequency: 10s
    harvester_buffer_size: 16384
    tail_files: false
    force_close_files: false
    backoff: 1s
    max_backoff: 1s
    backoff_factor: 2
    partial_line_waiting: 5s
    max_bytes: 10485760

After making the changes, start the filebeat service and check the filebeat process:

[root@nginx filebeat]# service filebeat start
Starting filebeat: [ OK ]
[root@nginx filebeat]# chkconfig filebeat on
[root@nginx filebeat]# netstat -aulpt
Active Internet connections (servers and established)
Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name
tcp 0 0 *:ssh *:* LISTEN 1076/sshd
tcp 0 0 localhost:smtp *:* LISTEN 1155/master
tcp 0 0 *:http *:* LISTEN 1446/nginx
tcp 0 52 nginx.test.com:ssh 192.168.30.65:11690 ESTABLISHED 1313/sshd
tcp 0 0 nginx.test.com:49500 elk.test.com:commplex-main ESTABLISHED 1515/filebeat
tcp 0 0 nginx.test.com:ssh 192.168.30.65:6215 ESTABLISHED 1196/sshd
tcp 0 0 nginx.test.com:ssh 192.168.30.65:6216 ESTABLISHED 1200/sshd
tcp 0 0 *:ssh *:* LISTEN 1076/sshd

The output shows that the filebeat process on the client has connected to the elk server. The next step is to verify the result.

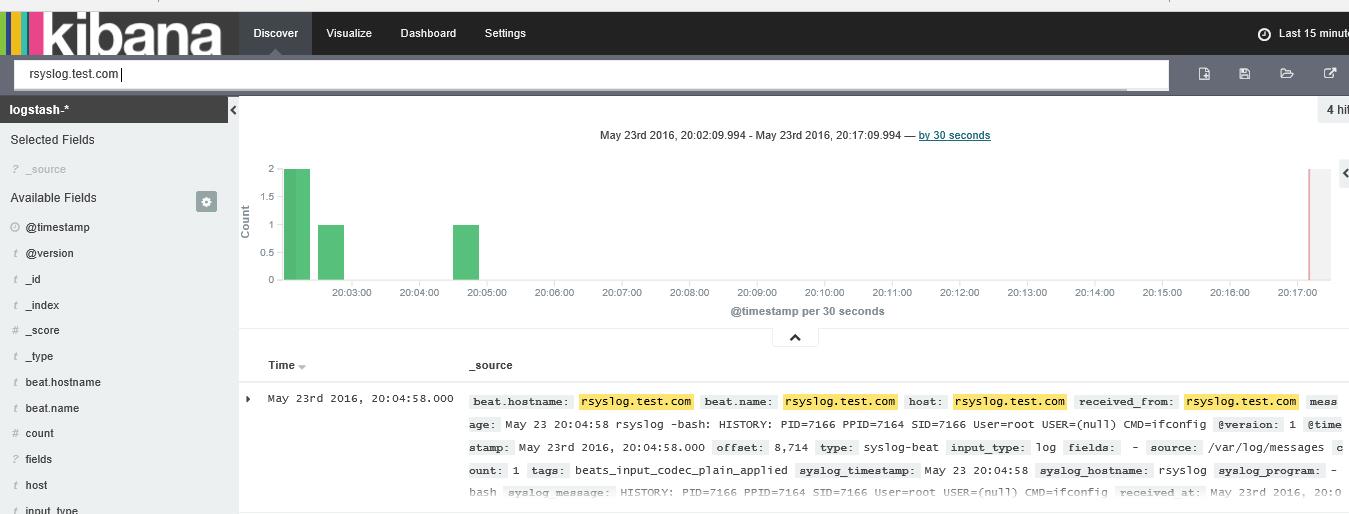

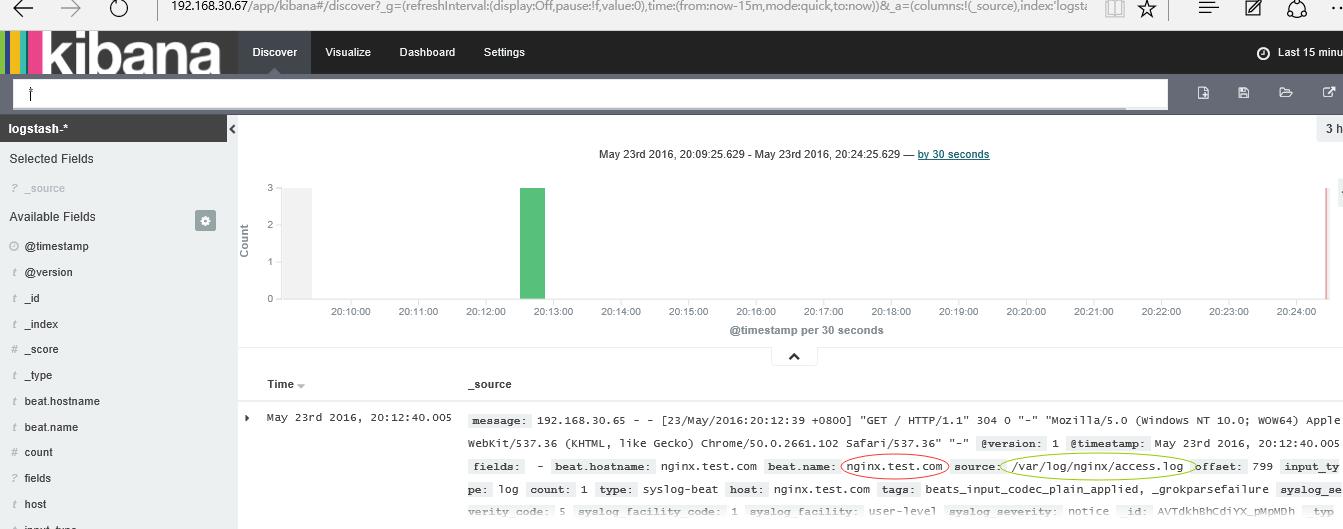

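5. Verification: open Kibana at http://192.168.30.67

5.1 Initial setup

System logs from the two machines. node1:

node2, nginx access log:

6. Impressions

I had previously been learning rsyslog + LogAnalyzer. After working through this setup, I find ELK better both as an overall system and in day-to-day use, and it is updated quickly. I will keep learning and post follow-ups on log monitoring and filtering, log analysis, and an architecture that uses Kafka for storage.

This article is original work. If you find it useful, please credit the source when reposting. Thanks.

References: https://www.elastic.co/products/elasticsearch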

https://www.elastic.co/downloads